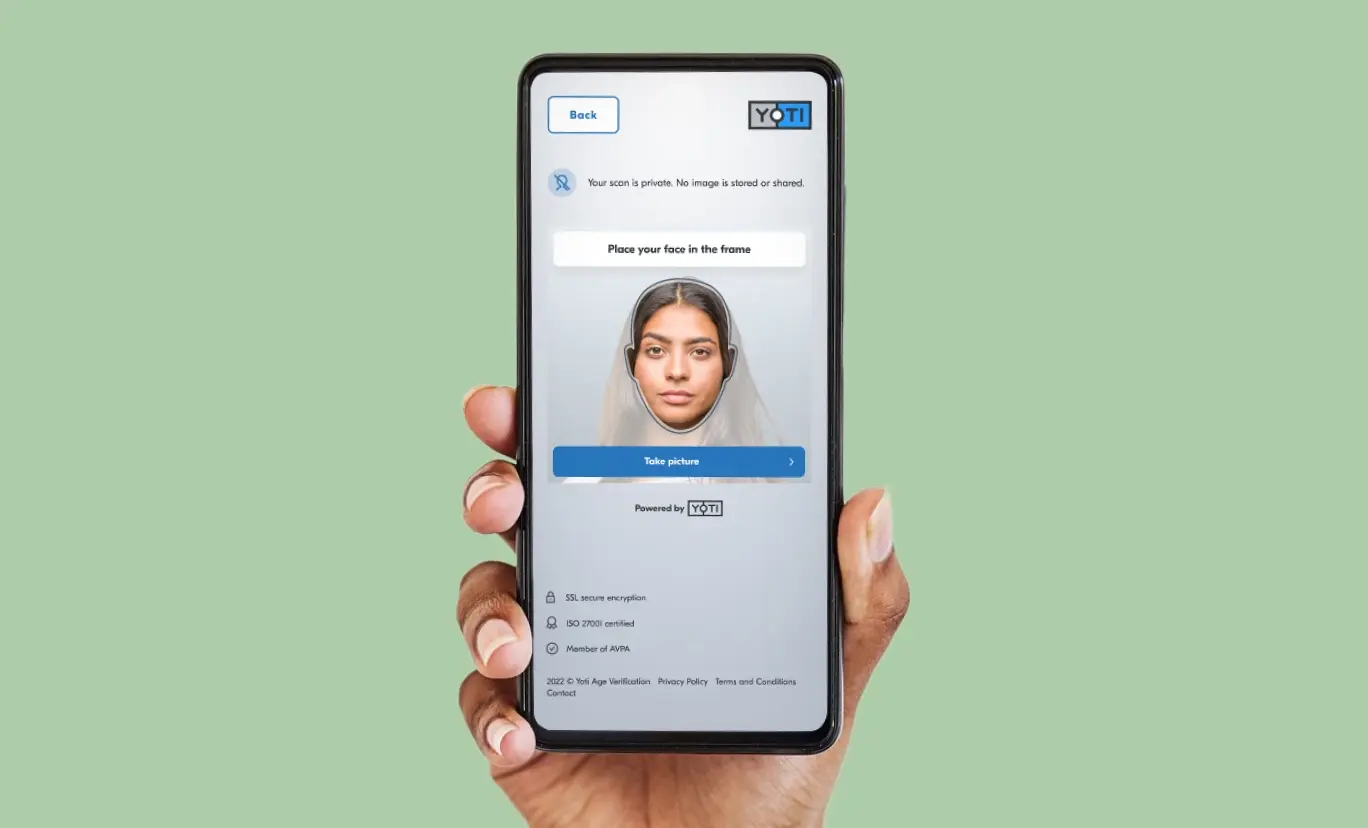

Yoti MyFace Match is what’s known as a 1:1 and 1:N face matching solution. The technology compares a single image with another image (1:1) or with a set of images (1:N) in real time to determine whether they show the same person. Yoti licenses this facial recognition technology to businesses wanting to be sure that, for example, the right person is accessing their online accounts.

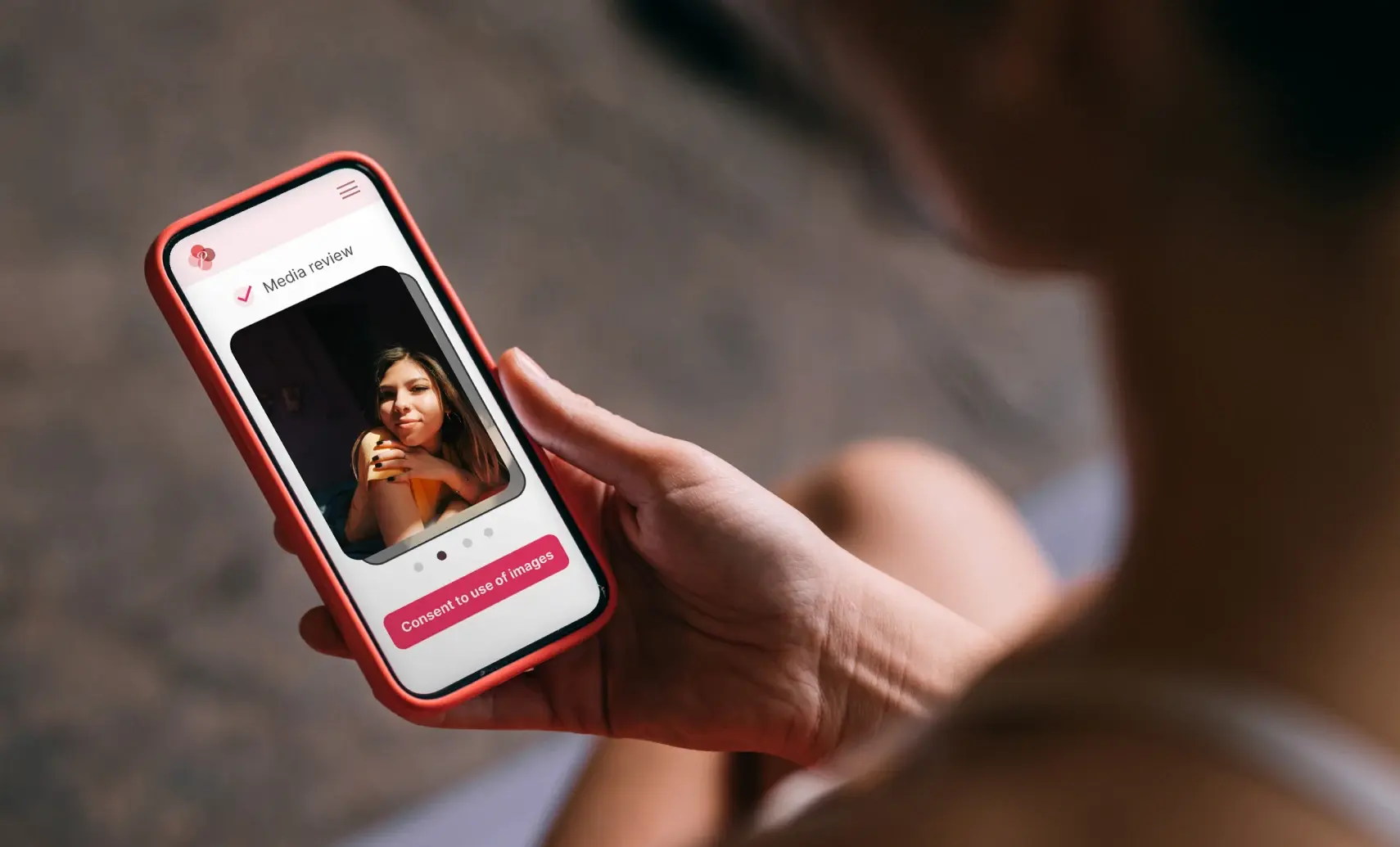

MyFace Match is also useful to businesses because they can invite users to opt in to be ‘verified’ and then require them to provide consent whenever their image or content is published. In the case of live streaming, businesses can monitor the stream to ensure only consenting individuals appear, and flag to moderators when an ‘unverified’ individual is detected.

Yoti MyFace Match can also be used to manage exclusion lists, where an individual may be banned from a platform for poor behaviour or breaching rules. For example, if a gaming platform bans a user and they then try to open an alternative account, the Yoti service can match the selfie against an exclusion list so that the business can deny platform access to that user.
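As a rough illustration of how a 1:N check against an exclusion list can work in principle, the sketch below compares a new selfie's face embedding with each stored embedding and reports the best match if it exceeds a threshold. This is not Yoti's API or implementation: the function names, the use of cosine similarity, the toy three-dimensional embeddings and the 0.95 threshold are all assumptions made for the example.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_exclusion_match(probe, exclusion_list, threshold):
    """Return (entry_id, score) for the closest entry if its score exceeds
    the threshold, otherwise None. All names here are hypothetical."""
    best_id, best_score = None, -math.inf
    for entry_id, embedding in exclusion_list.items():
        score = cosine_similarity(probe, embedding)
        if score > best_score:
            best_id, best_score = entry_id, score
    if best_id is not None and best_score > threshold:
        return best_id, best_score
    return None

# Toy embeddings (assumed); real face embeddings have hundreds of dimensions.
exclusion_list = {
    "banned-user-1": [0.9, 0.1, 0.0],
    "banned-user-2": [0.0, 1.0, 0.0],
}
new_signup = [0.88, 0.12, 0.01]   # resembles banned-user-1
unrelated = [0.0, 0.0, 1.0]       # resembles nobody on the list

match = best_exclusion_match(new_signup, exclusion_list, threshold=0.95)
no_match = best_exclusion_match(unrelated, exclusion_list, threshold=0.95)
```

Here `match` identifies `banned-user-1`, so the sign-up could be denied or sent for review, while `no_match` is `None` and the sign-up would proceed.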

Until recently, our R&D team focused on developing our world-class facial age estimation and liveness models. We have only recently started developing our own face matching models.

Although this is a relatively new endeavour, our expert in-house R&D team’s experience in developing such models means we have already achieved great success.

Facial recognition algorithms are measured by their True Positive Rate (TPR): the proportion of comparisons in which two separate facial images of the same person are correctly matched. Our latest (V3) model shows a TPR of 99.9%, a world-class rate of accuracy.

Our models are optimised for visa- or passport-style selfies and images, the kind most commonly used for verification purposes.

Another key measure used to assess models is their False Positive Rate (FPR): the proportion of comparisons in which a model incorrectly gives a positive result, matching two faces that belong to two different people. This tends to be the most problematic situation for policy makers, organisations and indeed the general public.
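To make the two definitions concrete, here is a minimal sketch of how TPR and FPR could be computed from similarity scores at a single decision threshold. The scores, the threshold and the function name are invented for illustration and are not Yoti's data or code; the sketch assumes a pair is declared a match when its score exceeds the threshold.

```python
def rates_at_threshold(genuine_scores, impostor_scores, threshold):
    """Return (tpr, fpr) for one threshold.

    genuine_scores: scores for pairs of images of the same person.
    impostor_scores: scores for pairs of images of different people.
    """
    true_positives = sum(1 for s in genuine_scores if s > threshold)
    false_positives = sum(1 for s in impostor_scores if s > threshold)
    tpr = true_positives / len(genuine_scores)
    fpr = false_positives / len(impostor_scores)
    return tpr, fpr

# Invented example scores (assumption), in the range 0 to 1.
genuine_scores = [0.97, 0.95, 0.91, 0.88, 0.45]
impostor_scores = [0.10, 0.22, 0.35, 0.52, 0.93]

tpr, fpr = rates_at_threshold(genuine_scores, impostor_scores, threshold=0.9)
# Three of five genuine pairs and one of five impostor pairs exceed 0.9.
```

Raising the threshold lowers the FPR but also lowers the TPR, which is the trade-off any face matching model has to balance.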

Below are the results of our two models, V1 in orange and V3 in blue, showing the improvement from one to the other. The graph shows the ROC (Receiver Operating Characteristic) curve. This plots TPR against varying tolerances for FPR to give an overall indication of a model’s performance. It enables us to view the model in a similar way to a cost (FPR) / benefit (TPR) analysis.

The y-axis shows the TPR as a percentage; the x-axis shows the FPR tolerance rate, where 10⁻⁶ is 1 in a million, 10⁻⁵ is 1 in 100,000 and so on.

The most important measure in the graph is the TPR at an FPR of 10⁻⁶: a TPR of 99.9% with an FPR of 1 in a million.
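A figure such as "TPR at a fixed FPR" is typically obtained by first choosing the decision threshold that keeps false positives within the tolerance, then measuring the TPR at that threshold. The sketch below shows one simple way to do this, assuming a pair counts as a match when its score exceeds the threshold. The function name and the toy scores are assumptions, and the 25% tolerance is chosen only so the example fits in a few lines; measuring an FPR of 1 in a million reliably needs well over a million comparisons between different people.

```python
import math

def tpr_at_fpr(genuine_scores, impostor_scores, target_fpr):
    """Return (threshold, tpr, fpr) with the measured fpr <= target_fpr."""
    ranked = sorted(impostor_scores, reverse=True)
    # Number of impostor pairs we can afford to accept; the small epsilon
    # guards against floating-point error in the multiplication.
    allowed = math.floor(target_fpr * len(ranked) + 1e-9)
    if allowed >= len(ranked):
        threshold = -math.inf          # every pair may be accepted
    else:
        threshold = ranked[allowed]    # only scores above this are matches
    tpr = sum(1 for s in genuine_scores if s > threshold) / len(genuine_scores)
    fpr = sum(1 for s in impostor_scores if s > threshold) / len(impostor_scores)
    return threshold, tpr, fpr

# Invented example scores (assumption).
genuine_scores = [0.95, 0.90, 0.85, 0.60, 0.35]
impostor_scores = [0.05, 0.10, 0.15, 0.20, 0.30, 0.40, 0.55, 0.80]

threshold, tpr, fpr = tpr_at_fpr(genuine_scores, impostor_scores, target_fpr=0.25)
strict_threshold, strict_tpr, strict_fpr = tpr_at_fpr(
    genuine_scores, impostor_scores, target_fpr=0.0
)
```

With these toy scores, tightening the tolerance from 25% to zero raises the threshold and reduces the TPR, which is the movement along the ROC curve described above.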

This means 999 out of 1,000 genuine users would be correctly matched and 1 would be flagged to the platform for further checks. This accuracy is hugely helpful to operators and moderation teams. At the same time, only about 1 in 1 million comparisons between two different people would result in an incorrect match.
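The arithmetic behind these figures can be checked with a short calculation. The sketch below uses the TPR of 99.9% and the FPR of 1 in a million quoted above; the function name and the volumes passed to it are illustrative assumptions, and the results are expected averages rather than guarantees.

```python
def expected_outcomes(genuine_attempts, impostor_comparisons,
                      tpr=0.999, fpr=1e-6):
    """Expected counts given a TPR and FPR.

    genuine_attempts: comparisons where both images show the same person.
    impostor_comparisons: comparisons where the images show different people.
    """
    correctly_matched = genuine_attempts * tpr
    flagged_for_review = genuine_attempts * (1 - tpr)
    false_matches = impostor_comparisons * fpr
    return correctly_matched, flagged_for_review, false_matches

matched, flagged, false_matches = expected_outcomes(1_000, 1_000_000)
# Roughly 999 correct matches, 1 genuine user flagged, 1 false match.
```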

Our V1 model is currently under evaluation by the National Institute of Standards and Technology (NIST). NIST conducts the globally recognised Face Recognition Vendor Test (FRVT), evaluating the performance of models across different ages, genders and skin tones. We are expecting the results imminently. We will then submit our next model, or an improved version of it, to NIST for evaluation in June 2024.

By developing our own in-house face matching solution, we can customise the technology for different business requirements and enhance our identity verification capabilities.